The ‘mosaic approach’ at UTS is a whole‑of‑course approach to assessment design that builds confidence that students have achieved the course intended learning outcomes (CILOs) through a combination of complementary assessment strategies across a course, rather than relying on any single secure assessment task.

The visual metaphor of a mosaic is intentional. Just as individual tiles in a mosaic come together to form a complete picture, different assessment tasks, contexts and levels of security combine across the course to create a valid and accurate picture of student learning and progression against the CILOs.

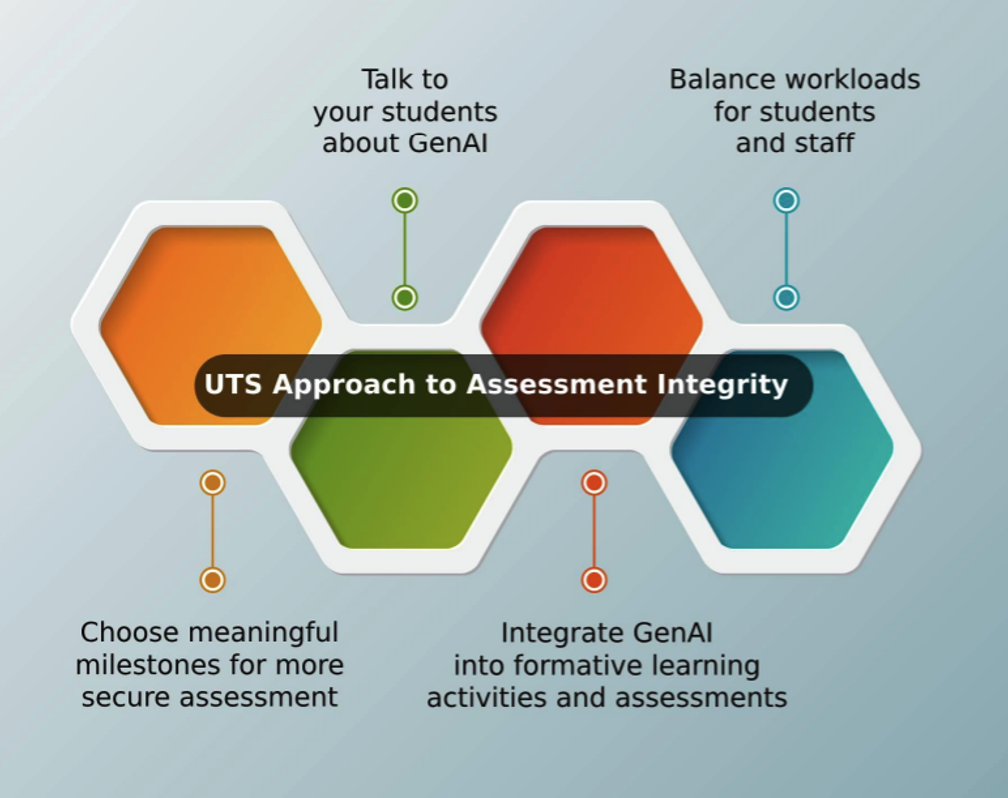

The mosaic approach has four key aspects: choosing meaningful milestones for more secure assessments; talking to students about GenAI; integrating GenAI into formative learning activities and assessments; and balancing workloads for students and staff.

1. Choose meaningful milestones for more secure assessments

Assessment security at UTS should be achieved through thoughtful design across the stages of a course and across different assessment contexts, not within any single task. Course teams identify key points in a course where more secure (often real-time or interactive) assessments are used to directly assure achievement of the CILOs. These are intentionally balanced with other assessment tasks that may be more open, developmental or authentic, including tasks where the ethical and critical use of GenAI is appropriate. Taken together, these elements strengthen confidence in learning outcomes while supporting good assessment practice, student experience and wellbeing.

2. Talk to your students about GenAI

Course teams need to ensure there is a consistent and coherent GenAI position statement for the course, communicated at orientation or in the first class and revisited at each year level or stage. This statement should not simply list rules; it should explain the reasoning: why some assessments permit GenAI and others don’t, and how this connects to the CILOs. Students who understand the rationale are less likely to misuse GenAI tools and more likely to engage authentically.

Each new subject needs an explicit conversation about GenAI expectations for that subject, including how those expectations may differ from other subjects in the course. A short activity can work well here. For example, ask students to discuss where they think GenAI could legitimately help them in this subject and where it would undermine their learning. This surfaces assumptions early and builds shared norms.

3. Integrate GenAI into formative learning activities and assessments

Course teams need to identify subjects at each year level where GenAI use is explicitly and intentionally scaffolded into the learning design and assessment. This prevents students from experiencing an incoherent patchwork of ‘allowed’ and ‘not allowed’ without understanding the underlying educational purpose. Ideally, the course shows a developmental arc, with students’ use of GenAI built up progressively from one year level to the next.

At the subject level, formative activities that incorporate GenAI are included in line with the course design. These activities serve a dual purpose: they build the critical AI literacy that students will need as graduates, and they make it much harder for students to confuse GenAI output with their own learning.

4. Balance workloads for students and staff

Course teams need to audit the overall assessment load across the course each year. A common finding is that assessment has accumulated over time: individual academics add tasks in good faith, but the cumulative effect on students is overwhelming. Students should be able to engage meaningfully with each assessment task within the workload implied by the subject’s credit point weighting; if this is not realistic, the course has too many tasks, and both the quality of student work and academic integrity will suffer. Fewer, more substantial and more heavily weighted tasks are generally better for both learning and integrity than many small ones.

Designing clear, focused marking criteria and using structured feedback templates can make marking more manageable. Peer review and self-assessment, when well-scaffolded, can also distribute some of the feedback load while deepening student engagement.