Authored by Behzad Fatahi and Karen Whelan.

Prefer to listen first, read later? This video podcast offers an alternative experience and highlights the key themes in our blog. Read on to see how we worked with our AI teammate to get it done.

Higher education is at a critical moment in assessment design. The key issue is no longer whether students use generative AI, but how assessment should be designed when GenAI is available 24/7.

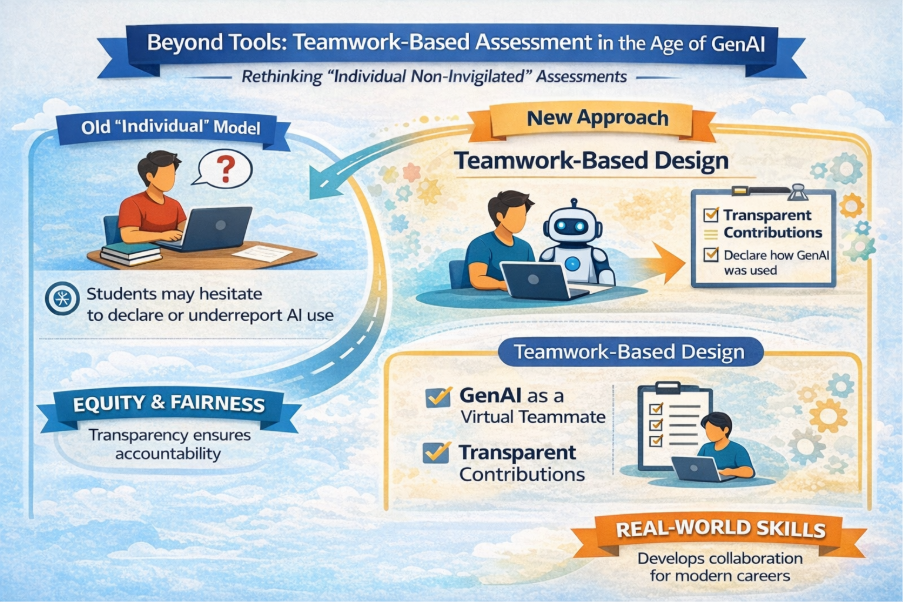

For any assessment task that is not invigilated or supervised, students have continuous access to platforms such as ChatGPT, Gemini, Copilot, and similar systems. This is now a structural condition of learning rather than an exception. Yet many such tasks are still labelled and assessed as individual, implicitly assuming that students have completed the work alone. In some cases, educators ask students to declare whether they have used GenAI in an individual task. However, this approach is often ambiguous. When a task is framed as individual, students may hesitate to report GenAI use, or may underreport the extent of its use, because collaboration, even with AI, feels misaligned with the assessment label. As a result, the assumption that students work alone is becoming increasingly difficult to justify pedagogically, ethically, and practically.

A new pedagogical challenge, or just more visible now?

Higher education has long dealt with the challenge of collaboration in assessment. International scholarship on group work and collaborative assessment, particularly the work of Boud and Bearman, has consistently shown that meaningful learning in complex, professional disciplines occurs through collaboration, and that assessment should make this collaboration visible rather than hidden.

GenAI has not introduced collaboration into learning; it has simply made collaboration unavoidable. The pedagogical question, therefore, is not how to eliminate collaboration, but how to design for it responsibly.

A necessary shift in assessment design

If GenAI is always available in non-invigilated contexts, then a more coherent response is to design these tasks explicitly as team-based assessments, recognising that one of the collaborators may be a virtual one.

This does not remove individual accountability. Decades of research on group assessment show that accountability is best achieved not by denying collaboration, but by structuring it transparently.

In this form of teamwork, the student is not a passive participant but the team leader. The student retains full responsibility for planning the task, setting goals, allocating work (including how and where GenAI is used), integrating outputs, exercising judgement, and making final decisions. GenAI does not lead, decide, or take responsibility; it supports the group work as a teammate. Oversight, direction, and accountability remain firmly with the student as team leader.

At the heart of this shift are two simple ideas.

1. Treat GenAI as a virtual teammate, not a tool

GenAI is often described as a tool, similar to a calculator or software. While convenient, this framing underestimates how GenAI functions in practice.

Invigilated individual assessments provide a useful lens. In these tasks, students may be allowed to use tools and passive resources such as notes, textbooks, or programmable calculators, but one rule is universal: students cannot receive help from another person. If GenAI were simply a tool, it could logically be permitted in invigilated exams. Yet it is widely prohibited, not because of the technology itself, but because of what it does.

GenAI explains ideas, generates reasoning, drafts responses, interprets problems, and co-creates solutions. These behaviours resemble those of a collaborator, not a passive resource. Seen this way, excluding GenAI from invigilated tasks is entirely consistent with long-standing assessment principles.

This suggests that the common message “GenAI is just another tool” oversimplifies its role. A more accurate framing is that GenAI operates as a virtual teammate, sometimes helpful, sometimes misleading, and always requiring judgement. Importantly, however, the student remains the team leader, responsible for critically evaluating all GenAI outputs used in their process.

This framing allows educators to draw on established teamwork assessment frameworks, rather than inventing AI-specific rules that overlook decades of research on collaboration and assessment.

2. Design non-invigilated assessment using group-work principles

For non-invigilated tasks, assessment design should explicitly reflect the reality of collaboration. This can be achieved by applying well-established group-work assessment principles, including:

- assessing both the quality of the final product and the process of collaboration

- requiring students to declare the contribution of each group member, including GenAI

- incorporating reflective components that focus on judgement, decision-making, and responsibility

- maintaining individual accountability through transparent contribution statements and weighting

Within this framework, the student’s leadership role becomes assessable: how they planned the work, managed the collaboration with GenAI, guided the process, and demonstrated ownership of the outcomes.

These practices are already widely used internationally and are well understood by students and educators alike.

Equity through transparency, not prohibition

Concerns about equity, particularly differences in students’ access to more or less advanced GenAI platforms, are real. However, research on group assessment suggests that equity is not achieved by assuming identical inputs, but by ensuring transparent accountability for contribution and judgement.

When students must explain how GenAI contributed to their work, how they directed its use, and how they accepted, modified, or rejected its outputs, assessment focuses on what truly matters: the student’s leadership, evaluative capability, and professional judgement. In this way, transparency becomes a mechanism for fairness rather than a liability.

Alignment with professional and accreditation expectations

This approach aligns strongly with professional accreditation frameworks. Engineers Australia Stage 1 Competency 3.6 requires graduates to demonstrate effective team membership and leadership, including working in diverse teams, valuing alternative viewpoints, exercising judgement, and seeking expert advice. Treating GenAI as a virtual teammate is not intended to replace the competency requirements for teamwork with human peers, but to complement them, reflecting the reality that contemporary professional teams increasingly include both human and virtual teammates. Positioning the student as the leader of a team that includes GenAI directly supports these leadership and responsibility competencies, while also highlighting the potential need for an additional and increasingly important competency covering the ethical, appropriate, and professional use of GenAI as a teammate.

Similarly, the Australian Computer Society emphasises teamwork, collaboration in virtual environments, conflict resolution, and professional judgement. Designing assessment that reflects collaboration with digital agents mirrors contemporary professional practice rather than imposing an artificial separation between “human” and “AI” work.

Looking ahead

GenAI has not broken assessment. What it has exposed is a growing mismatch between how non-invigilated assessment is labelled and how learning actually occurs. Pretending that students work alone when collaboration is structurally present undermines authenticity and fairness.

By treating GenAI as a virtual teammate, with the student explicitly positioned as the team leader, and by designing non-invigilated assessment using established group-work frameworks, higher education can respond to GenAI in a way that is pedagogically rigorous, equitable, and aligned with professional practice, without reinventing the wheel.